Introduction

In my role as a Cloud Solution Architect, I’m often faced with the statement that cloud is expensive. My reply is always that cloud is not more expensive than On Premises if you take into account all the costs involved. As this is an easy statement to make… I made an effort to create a cost comparison for four different scenarios (in terms of deployment size) and stacked “On Premises” vs “Cloud”.

In this post we’ll discuss this calculation and ensure that we are comparing apples to apples!

Design Decisions

For this calculation, I’ve made several design decisions ;

- In terms of scope, I’m “limiting” this calculation to the deployment of “some” virtual machines on an “internal” network (from a network point-of-view). We will not be incorporating internet traffic, firewalls, etc.

- For the On Premises setup, we’ll be using Dell equipment. My personal opinion is that they prove to have a very good price / quality mix for enterprises. As the Hypervisor, we’ll add VMware vSphere to the mix, as it’s the most commonly deployed Hypervisor in Belgium.

- For the Cloud setup, we’ll use Azure (who would have thought!). 😉

- In regards to pricing, I’ll “limit” myself to publicly available list prices. For day rates, I’ll be using a fixed 1.000€ / day. And in terms of managed services costs, I’ll be using the ones I have commonly seen used in the Belgian territory.

- We will assume we are using Linux (ubuntu?) virtual machines to reduce the complexity that licensing might add to the mix.

- For the On Premises hardware, we’ll be using a depreciation period of 5 years (60 months). The hardware will be leased at a rate of 2%. Support contracts will be foreseen for 5 years with a 24×7 2h on-site SLA.

- We’ll be choosing components that allow us to scale from 0 to a few components. This by using the same building blocks for scaling by “simply” adding another block once our capacity has reached its limits.
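To make the leasing assumption a bit more tangible, here is a minimal sketch of a monthly lease payment using the standard annuity formula. It assumes the 2% is a yearly interest rate compounded monthly over the 60 months, which the post does not spell out. The 10.000€ purchase price is purely hypothetical and is not taken from any of the BOMs.

```python
def monthly_lease_payment(price_eur: float,
                          yearly_rate: float = 0.02,
                          months: int = 60) -> float:
    """Monthly payment for a lease, using the standard annuity formula.

    Assumption (not from the post): the rate is a yearly interest rate,
    compounded monthly over the full depreciation period.
    """
    if yearly_rate == 0:
        # Without interest, the price is simply spread over the period.
        return price_eur / months
    monthly_rate = yearly_rate / 12
    return price_eur * monthly_rate / (1 - (1 + monthly_rate) ** -months)


# Hypothetical purchase price, for illustration only.
print(round(monthly_lease_payment(10_000), 2))
```

Swapping in your own list price and lease conditions gives the “Hardware” part of the monthly cost. The other cost aspects (housing, setup, operations, …) come on top of that.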

As for the components used, we’ll have the following categories ;

- Compute ; a basic rack server which will serve as our host for the virtual machines

- Storage ; an ethernet network based SAN to provide storage to our virtual machines

- Network ; the basic link between our compute & storage

For each category, we’ll add the following cost aspects ;

- Hardware ; “the iron” / “the devices” (source)

- Housing ; the power, rack space, physical security, … (source)

- Setup ; my personal & modest estimate needed to set up the device

- Operations ; the effort needed to do basic incident & problem management, healthchecks & monitoring

- Upgrade ; regular upgrades of the system (4x per year)

- Software ; the cost for the software (for instance; VMware vSphere)

Compute : Rack Server Bill-of-Material

The rack server will have the following BOM (Bill-of-Material) ;

- Dell R630

- 2x Intel® Xeon® E5-2670 v3 2.3GHz,30M Cache,9.60GT/s QPI,Turbo,HT,12C/24T (120W) Max Mem 2133MHz

- 24x 16GB RDIMM, 2400MT/s, Dual Rank, x8 Data Width

- 2x 120GB Solid State Drive SATA Boot MLC 6Gbps 512n 2.5in Hot-plug Drive

- 1x PERC H330 RAID Controller

- 1x Intel X520 DP 10Gb DA/SFP+, + I350 DP 1Gb Ethernet, Network Daughter Card

- 1x Mellanox ConnectX-3 Dual Port 10Gb Direct Attach/SFP+ Low Profile Network Adapter

- 2x Dell Networking, Cable, SFP+ to SFP+, 10GbE, Copper Twinax Direct Attach Cable, 1 Meter

- 5Yr ProSupport and 4hr Mission Critical

For most who are accustomed to virtualization hosts, this is not an odd configuration. The only odd thing might be the RDMA adapter for some. Though this is a must for me!

Now let’s use this BOM to calculate our costs!

This results in a monthly cost of 1461.17€ for one host.

Compute : Common Pitfalls

The first thing I commonly see going wrong in calculations is the following way of thinking ;

I have a host with 24 cores & 384 GB memory. So now I can have 24 virtual machines on that with 16GB memory each. That would result in a cost per VM of 61€ / month.

The first thing to note here is that enterprises rely on an “N+1”-concept. This means that the failure of one node should not affect the whole. So to facilitate this, we need one additional host in our “farm”/”cluster”/”group”/… So if we can put all our VMs on one host, then the N+1 would double that cost. If we would need the capacity of five hosts, then the N+1 would add 20% to the cost.
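The arithmetic behind the naive figure and the N+1 overhead can be sketched as follows. This is a minimal illustration using only the numbers mentioned above (1461.17€ per host per month, 24 VMs per host). The function names are mine and are not part of the original calculation sheet.

```python
HOST_MONTHLY_COST_EUR = 1461.17  # monthly cost of one host, from the post


def naive_cost_per_vm(vms_per_host: int) -> float:
    """Cost per VM if a single host is filled completely, with no spare host."""
    return HOST_MONTHLY_COST_EUR / vms_per_host


def n_plus_one_overhead(hosts_needed: int) -> float:
    """Extra cost, as a fraction, of adding one spare host to N hosts."""
    return 1 / hosts_needed


print(round(naive_cost_per_vm(24), 2))  # 60.88, the "61€ / month" from above
print(n_plus_one_overhead(1))           # 1.0 -> the cost doubles
print(n_plus_one_overhead(5))           # 0.2 -> 20% on top
```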

Another thing to note is the expected & tolerated “usage level”. With the 61€ / VM, we are assuming that each host will be used at 100%. Now does the operational team want all hosts to run at 100%? Of course not. Aside from that, it’s also an illusion to think that at the deployment of a host, the host will be fully utilized. Requests arrive not in full batches, but one-at-a-time. In reality, there will always be a certain loss of capacity per host.

The most common error however is that the cost of a virtual machine is solely linked to the hardware + software. If we would delve into the costs details I used earlier on, then we’ll see the following composition for a compute host ;

In my example, the hardware + software “only” makes up 31% of the costs. So about a third of the costs is related to the “products”. Though we immediately notice that the “operational” aspect of keeping the device up & running / up-to-date is the biggest chunk. This takes up a bit more than half of the cost!

With my first cost of 61€ / VM, I was using a 1:1 ratio. Does that sound familiar? With compute hosts it’s common to “over provision” cores. The ratio indicates the relation between virtual cores (vCore) and physical cores (pCore). Those virtual cores are the ones you see in your VM… When I do On Premises sizing, I often use a 4:1 ratio, meaning four vCores per pCore. In reality, this means that each virtual core will be able to use 25% of a physical core on average. This does not take into account peak loads… So there might be contention in regards to the CPU scheduling.

In the above calculation you can see the impact of the amount of virtual machines & memory per VM for several ratios. In addition, I’ve also incorporated a utilization limit (maximum “usage level”) of 90%. This allows us to have some “breathing room” for operational actions.
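As a rough sketch of how the ratio and the utilization limit interact, the snippet below estimates how many VMs fit on one host and what that does to the cost per VM. It makes two assumptions that are mine and may differ from the original calculation sheet: memory is never over provisioned, and the utilization limit applies to both cores and memory.

```python
import math
from dataclasses import dataclass


@dataclass
class Host:
    cores: int = 24                    # 2x 12 cores, from the BOM
    memory_gb: int = 384               # 24x 16GB, from the BOM
    monthly_cost_eur: float = 1461.17  # from the post


def vms_per_host(host: Host, vcores_per_vm: int, memory_gb_per_vm: int,
                 ratio: float = 4.0, utilization_limit: float = 0.9) -> int:
    """VMs that fit on one host; the scarcer resource decides."""
    by_cpu = math.floor(host.cores * ratio * utilization_limit / vcores_per_vm)
    # Assumption: memory is not over provisioned.
    by_memory = math.floor(host.memory_gb * utilization_limit / memory_gb_per_vm)
    return min(by_cpu, by_memory)


def cost_per_vm(host: Host, vcores_per_vm: int, memory_gb_per_vm: int,
                ratio: float = 4.0, utilization_limit: float = 0.9) -> float:
    fit = vms_per_host(host, vcores_per_vm, memory_gb_per_vm,
                       ratio, utilization_limit)
    return host.monthly_cost_eur / fit


host = Host()
# The naive case: 1:1 ratio, 100% utilization, 16GB per VM.
print(vms_per_host(host, 1, 16, ratio=1.0, utilization_limit=1.0))  # 24
# 4:1 ratio with a 90% limit: memory becomes the bottleneck.
print(vms_per_host(host, 1, 16))  # 21
# A 1 core / 2GB VM is limited by the cores instead.
print(vms_per_host(host, 1, 2))   # 86
```

Note how the 16GB VM gains nothing from the 4:1 ratio, because memory runs out long before the cores do. These figures do not include the N+1 host.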

Storage : iSCSI Storage

For storage, we’ll be using the Dell EqualLogic. Why? Because this device allows for an easy “block full, add another block”… This really smooths things out for comparisons of costs. The iSCSI device will have the following BOM (Bill-of-Material) ;

- Dell EqualLogic PS6210X

- 24x 600GB 10K SAS 2.5″ 14.4TB Capacity

- 5Yr ProSupport and 4hr Mission Critical

Why go for the 600GB 10K SAS drives? This was the most commonly used disk size before the “all-flash arrays” arrived. So for a lot of companies who are considering replacing their infrastructure at this moment, this is the most relevant comparison.

So what does this mean in terms of costs?

Which would result in a monthly cost of 1781.42€

Just like with the compute, the majority of the monthly costs are derived from “operational effort”. But what about the cost per GB?

Here we see the concept of the “usage level” (utilization limit) come back into play… But also the number of copies we want! We start from a raw capacity of 14.4TB. If we put a mirror (RAID 1) on this, then we have two copies of the data. This means that we “lose” 50% of the capacity to redundancy. Now Azure stores three copies, which means a loss of roughly 66% of the capacity. This level of redundancy has an impact on the usable capacity, and increases the price per GB!
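The effect of the number of copies and the utilization limit on the price per GB can be sketched like this. It is a simplified model under my own assumptions: 1TB is counted as 1000GB, and the overhead of spare disks or file systems is ignored. The 90% limit reuses the value mentioned for compute and is not confirmed for storage.

```python
STORAGE_MONTHLY_COST_EUR = 1781.42  # monthly cost of one array, from the post
RAW_CAPACITY_GB = 14_400            # 24x 600GB, from the BOM


def usable_gb(raw_gb: float, copies: int,
              utilization_limit: float = 1.0) -> float:
    """Capacity left after keeping `copies` copies of every block."""
    return raw_gb / copies * utilization_limit


def cost_per_gb(monthly_cost_eur: float, raw_gb: float, copies: int,
                utilization_limit: float = 1.0) -> float:
    return monthly_cost_eur / usable_gb(raw_gb, copies, utilization_limit)


for copies in (1, 2, 3):
    capacity = usable_gb(RAW_CAPACITY_GB, copies, utilization_limit=0.9)
    price = cost_per_gb(STORAGE_MONTHLY_COST_EUR, RAW_CAPACITY_GB,
                        copies, utilization_limit=0.9)
    print(f"{copies} copies: {capacity:.0f} GB usable, {price:.4f} EUR per GB")
```

Going from two to three copies takes away a third of the usable capacity, so the price per GB goes up by half for the very same hardware.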

Network : 10Gbit Switch

The heart of any IT infrastructure is always the network. As a big fan of SDN (software defined networking), it will not surprise you that I selected an ethernet switch for this comparison. The 10Gbit switch which we’ll be using is the following ;

- Dell N4032F

- 24x 10GbE SFP+ Ports

- 2X QSFP+ 40GbE

- 5Yr ProSupport and 4hr Mission Critical

Why go for this one and not a cheaper model? This is the first model that enables us to scale decently at 10Gbit port speed and has sufficient uplinks at 40Gbit, which would enable us to aggregate/stack the switches.

So what does this mean in terms of costs?

Which results in a monthly cost of 1139.72€

Just like with the compute/storage, the majority of the monthly costs are derived from “operational effort”. But what about the cost per port?

The cost per port is 47.49€ (per month)

Network : The first indicator towards a “startup”-cost

Those who have been looking in detail at the costs of compute & storage will have noticed that I mentioned the port cost per device, though I did not include it in the cost per device. Why? Because in an On Premises setup, we’ll need to buy the entire unit anyhow. This is one of the key fundamental differences between cloud & on premises ;

Where Cloud allows fine-grained scalability up & down, On Premises is more coarse-grained and typically follows a “forklift”-upgrade pattern.

This has the side effect that a network switch which is sized for 2, 20 or 200 systems is very different in terms of pricing.
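A small sketch of this “startup”-cost effect: the fewer ports you actually use, the more each used port costs, because the switch has to be paid for in full. The model is deliberately simple and rests on my own assumptions. It counts only the 24 SFP+ ports and ignores uplinks, stacking and redundancy.

```python
import math

SWITCH_MONTHLY_COST_EUR = 1139.72  # monthly cost of one switch, from the post
PORTS_PER_SWITCH = 24              # 10GbE SFP+ ports, from the BOM


def switches_needed(ports_used: int) -> int:
    """Whole switches required; you cannot buy half a switch."""
    return max(1, math.ceil(ports_used / PORTS_PER_SWITCH))


def cost_per_used_port(ports_used: int) -> float:
    """Monthly cost per port that is actually in use."""
    if ports_used <= 0:
        raise ValueError("ports_used must be positive")
    return switches_needed(ports_used) * SWITCH_MONTHLY_COST_EUR / ports_used


for ports in (2, 20, 24, 200):
    print(ports, switches_needed(ports), round(cost_per_used_port(ports), 2))
```

With only two ports in use, each port effectively costs 569.86€ per month instead of the 47.49€ of a fully used switch.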

Criticism : One-Size-Fits-All

The last remark brings us directly to the biggest criticism that one might have of this cost comparison post… As I’ll be delving into four scenarios ranging from 16 to 3000 VMs, some will say that the architecture for 16 VMs and for 3000 VMs is totally different.

And I concur on that point! Though as I said, for this comparison we used a “block full, add another block” philosophy during our component selection.

Compute : Azure T-Shirt sizes

For our scenarios, I chose to work with three types of VMs ;

- Small ; One core with 2GB RAM

- Medium ; Two cores with 4GB RAM

- Large ; Four cores with 16GB RAM

In my humble opinion, these are common sizes for many organizations. So the mapping to Azure VMs for these sizes is something that will look familiar to a lot of you. So how did I map those?

- Small ; F1

- Medium ; F2

- Large ; D12 V2

The last one has the disadvantage that this is over-sized. A D12 V2 has 4 cores, though it has 28GB RAM. So why did I not use the D11? Because it is 2GB short to meet our “Large” requirements.
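The mapping logic boils down to “take the smallest size that meets both the core and the memory requirement”. Below is a minimal sketch of that rule. The catalog only contains the four sizes discussed here. The D12 v2 specification comes from this post, while the F1, F2 and D11 v2 specifications are filled in by me from the published Azure size tables, so do verify them against the current documentation. Ordering the catalog by size instead of by price is also my simplification.

```python
from typing import NamedTuple, Optional


class Size(NamedTuple):
    name: str
    cores: int
    memory_gb: int


# Ordered from smallest to largest. Only the D12 v2 specs are stated in the
# post; the other specs are assumptions taken from the Azure size tables.
CATALOG = [
    Size("F1", 1, 2),
    Size("F2", 2, 4),
    Size("D11 v2", 2, 14),
    Size("D12 v2", 4, 28),
]


def smallest_fit(cores: int, memory_gb: int) -> Optional[Size]:
    """First catalog entry that meets both requirements, or None."""
    for size in CATALOG:
        if size.cores >= cores and size.memory_gb >= memory_gb:
            return size
    return None


for label, cores, memory_gb in (("Small", 1, 2), ("Medium", 2, 4), ("Large", 4, 16)):
    size = smallest_fit(cores, memory_gb)
    print(label, "->", size.name, f"({size.memory_gb - memory_gb}GB RAM to spare)")
```

For the “Large” VM this lands on the D12 v2 with 12GB of RAM to spare, which is exactly the over-sizing described above.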

So be aware that with a bit of optimization, the Azure cost could be reduced. I don’t want you to think that I’m handing out favors to Azure in this calculation. 😉 As a result, each scenario will be calculated as follows for Azure ;

Storage : Standard or Premium in Azure?

As we chose to go for 600GB 10K SAS disks, the equivalent of this in Azure is “Standard”-storage.

Which boils down to a whopping 0.04220€ per GB. I also went for LRS (locally redundant storage), as the On Premises variant did not have a GRS (geographically redundant storage) variant.

Comparison Scenarios : Bring on the contestants!

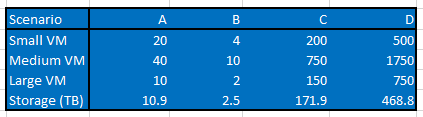

I mentioned that there were four scenarios ranging from 16 to 3000 VMs… but what are the details of these?

- Scenario A

  - Small ; 20

  - Medium ; 40

  - Large ; 10

- Scenario B

  - Small ; 4

  - Medium ; 10

  - Large ; 2

- Scenario C

  - Small ; 200

  - Medium ; 750

  - Large ; 150

- Scenario D

  - Small ; 500

  - Medium ; 1750

  - Large ; 750

Each VM comes with 160GB of storage. So we have a modest one (A), a tiny deployment (B), a bigger enterprise (C) and a very large enterprise (D).
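To get a feel for the size of each scenario, the totals can be added up with a few lines of code. The VM counts, the 160GB per VM and the 0.04220€ per GB are taken from this post. Treating that price as a monthly price per GB is my assumption, and the snippet only covers the Azure storage part, not the compute.

```python
SCENARIOS = {
    "A": {"small": 20, "medium": 40, "large": 10},
    "B": {"small": 4, "medium": 10, "large": 2},
    "C": {"small": 200, "medium": 750, "large": 150},
    "D": {"small": 500, "medium": 1750, "large": 750},
}

STORAGE_GB_PER_VM = 160
# From the post; assumed here to be a price per GB per month.
AZURE_STANDARD_EUR_PER_GB = 0.0422


def scenario_totals(vm_counts: dict) -> tuple:
    """Return (number of VMs, storage in GB, Azure storage cost in EUR)."""
    vms = sum(vm_counts.values())
    storage_gb = vms * STORAGE_GB_PER_VM
    return vms, storage_gb, storage_gb * AZURE_STANDARD_EUR_PER_GB


for name, vm_counts in SCENARIOS.items():
    vms, storage_gb, storage_cost = scenario_totals(vm_counts)
    print(f"Scenario {name}: {vms} VMs, {storage_gb} GB, "
          f"{storage_cost:.2f} EUR storage")
```

This confirms the range mentioned earlier: scenario B is the smallest with 16 VMs and scenario D the largest with 3000 VMs.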

Cost Comparison : Show me the money!

So how do the costs stack up to each other?

With the small deployments, the Cloud variant obliterates the On Premises variant. One could argue that the cost of the network switch causes this. That is partially true, as the network switch throws off the balance. Though even when swapping it for a cheaper model, the costs still stand in favor of Azure.

When looking at the large deployments, the costs are comparable to each other. There is no real winner in that area. Scenario C gives more or less the same result (~3% difference). A bigger difference is noticeable with scenario D. My personal opinion here is that this is mainly due to the uplift towards the D12 for the large VM. But in the end, fair is fair… The On Premises variant wins that scenario if we would just do a lift and shift without examining the VMs individually.

Closing Thoughts : Myth debunked!

What did we learn today?

The starting point for this blog post was to prove or debunk the myth that Cloud is more expensive than On Premises. I’m quite confident we can all agree that, if we take into account all cost aspects, this myth has been debunked!

Though I am aware that your mileage may vary and that a lot of departments are not accountable for all costs. For example, I’ve come across many organizations where the “housing” costs are typically part of “facilities”. So a move to cloud would decrease another manager’s budget and increase the one of the CIO / IT Manager… In the end, it’s the same balance sheet, but (to quote Nills) “compensation drives behaviour”!

Calculation Details : Roll your own comparison

Want to analyze my calculations in detail? No problem, here is the Excel Document I used as a source for this blog post! Here you can fiddle on your own too to see if certain parameters (which are different for your organization) would tip the calculations in another direction.

Closing Note : Understanding your costs

As a small end note… Over the years I’ve noticed that a lot of organizations are often baffled when we do a deep-dive into IT costs. For a lot of people this is a true eye-opener towards aspects they simply were not aware of. Though my personal opinion is that understanding your cost structure is KEY to any organization. Even if you, as an IT organization, do not engage in internal charge-back, it’s still key to understand this for your budget meetings. Not knowing the origin of your costs will leave you in a position where you cannot truly manage your department.

Interesting, though as with most things in life… It depends.

The on premise costs can be affected drastically by who designs the end system… An EquaLogic SAN for two nodes?! Please! Really good way to ram up the cost. Thats just poor design.

Ditto by using VMWare… Why not say HyperV, just one example of a free hypervisor! You are then sticking the hypervisor on a pair of SSDs on each host… That’s a lot of costs when for the hypervisor 10k disks would be totally fine.

You need a SAN, no problem… Get a vSAN using local storage on each box such as StarWind vSAN. Can be free if self supported. And the local disks would blow away any performance you would get overthrow iSCSI SAN.

For 30k, you can have two T630s, 11TB SSD local storage on both usable, close to 800 GB ram on each and 8 X 10G ports… A couple of N2048s is all that’s needed switch wise.

Over 5 years, compare what you can do on that kit to a bungalow of VMs on Azure… Far less cost.

Damn autocorrect on some words. But you should get my point.

I’m experimenting with the idea of just using simple non-redundant servers for hybrid to do high compute/ consistent loads with using cloud as a failover and scaling mechanism. Personally, the one time investment on the compute is not a bad deal (when not redundant) and we can even take advantage of the cloud elb to distribute to on prem and cloud as well.

Yeah…our Equallogic PS4100s are still in operation. A few controller repairs and drive replacements and the cost is now amortized over 8+ years, and the performance still massively exceeds anything we see in Azure.

You can also build ghetto VMWare failover with free licenses. Give me two 5-year-old Dell servers, a small network and a decent SAN, and I’ll have your small business making phat stacks with a minimal investment.

People get so wound up doing things the prescribed way. How about a little *creativity*? When I work on cars, I find creative uses for tools never designed for a particular purpose; they work great anyway.

In the era of ubiquitous “enterprise” hardware, this is not rocket science–and it doesn’t have to cost the fortune all the vendors want you to spend.